Video compression company V-Nova recently conducted a study of its technology with Silicon Valley tech giants Intel and Meta Platforms. Video compression is a key tool for applications in broadcast and audiovisual (AV), streaming platforms, social media, extended reality (XR), and the Metaverse.

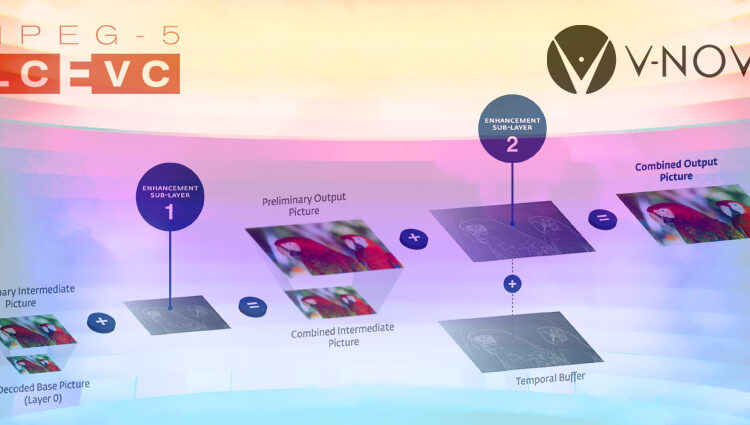

The International Society for Optics and Photonics (SPIE) published the study, which outlines how the London-based enterprise’s MPEG-5 Low Complexity Enhancement Video Coding (LCEVC) improves encoding and compression rates for x264, x265, and SVT-AV1 codecs.

Researchers tested more than 650,000 encodes across eight resolutions, multiple bitrates, 25 presets, and 140 self-similar clips, using VMAF, VMAF_NEG, SSIM, and PSNR visual quality metrics.

LCEVC greatly boosted AV1 decoding capabilities as well as battery life for mobile devices, the study found, citing improvements in several key metrics.

LCEVC Improvements

According to the study, testing showed improvements in:

- Compression performance: Testing utilised dynamically optimised convex-hull methods for VOD encoding for streaming and social networking platforms.

- Computational performance: LCEVC results showed similar levels of quality and performance with a nearly 40 percent reduction in computations with SVT-AV1, boosting energy efficiency.

- Decodability for AV1: The study found that LCEVC expanded the number of mobile devices that could play full HD AV1 content. It also boosted battery life by up to 50 percent compared to non-LCEVC streams with AV1 software decoders Dav1d and GAV1.
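For readers unfamiliar with the convex-hull approach mentioned in the compression bullet above, the general idea can be sketched in a few lines of Python. This is an illustrative sketch only, not the method or code used in the study: the function name `rate_quality_hull` and the sample bitrate and quality values are invented for this example. Given many candidate encodes of the same clip (for instance at different resolutions and presets), it keeps only those on the upper convex hull of the bitrate versus quality plot, which are the encodes worth offering to viewers.

```python
from typing import Dict, List, Tuple

# (bitrate in kbps, quality score such as VMAF); sample values are hypothetical
Point = Tuple[float, float]


def _cross(o: Point, a: Point, b: Point) -> float:
    """Cross product of vectors OA and OB; positive means a left turn."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def rate_quality_hull(encodes: List[Point]) -> List[Point]:
    """Return the encodes on the upper convex hull, sorted by bitrate.

    Illustrative helper (not from the study): drops encodes that another
    encode, or a mix of two other encodes, beats at the same bitrate.
    """
    # Keep only the best quality seen at each bitrate.
    best: Dict[float, float] = {}
    for bitrate, quality in encodes:
        best[bitrate] = max(best.get(bitrate, quality), quality)

    # Monotone-chain scan, left to right, keeping only right turns,
    # which traces the upper hull.
    hull: List[Point] = []
    for p in sorted(best.items()):
        while len(hull) >= 2 and _cross(hull[-2], hull[-1], p) >= 0:
            hull.pop()
        hull.append(p)

    # Drop the tail where spending more bits no longer raises quality.
    trimmed: List[Point] = []
    for p in hull:
        if trimmed and p[1] <= trimmed[-1][1]:
            break
        trimmed.append(p)
    return trimmed


if __name__ == "__main__":
    candidates = [(500, 70), (1000, 80), (1500, 82),
                  (2000, 90), (1000, 75), (2500, 89)]
    print(rate_quality_hull(candidates))
    # prints [(500, 70), (1000, 80), (2000, 90)]
```

In this toy data set, the 1,500 kbps encode is dropped because it falls below the line between the 1,000 and 2,000 kbps encodes, and the 2,500 kbps encode is dropped because it costs more bits for lower quality.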

Chart comparing encoding times with and without LCEVC across existing coding technologies. PHOTO: V-Nova

Guido Meardi, Chief Executive of V-Nova, said,

“The results published by SPIE have been the results of an amazing effort between all parties, leveraging on the knowledge, experience and methodology of Intel and Meta, to finally provide a set of quantitative results of the benefits of MPEG-5 LCEVC in a very relevant use case.”

LCEVC and XR

V-Nova notes that immersive videos depend on such high-performing codecs to deliver optimal experiences while people are using virtual, augmented, and mixed reality (VR/AR/MR) headsets.

The company's solutions could potentially allow people to use integrated services such as Microsoft Teams and Zoom on the new Meta Quest Pro, or TikTok streaming on the new Pico 4 and Pico 4 Enterprise.

YouTube and other streaming platforms will also benefit from such technologies, allowing viewers to watch videos at higher resolutions such as 4K and 8K, with lower latency and sharper contrast.

In a recent blog post, the company wrote,

“Advanced codecs, such as AV1 or VVC, could provide material benefits to address the bandwidth issues, but they are not viable from the encoding and decoding standpoint, at least for the foreseeable future. In addition, when 8K hardware decoding with those codecs does become available, it is very likely that we will need resolutions four times as high or more to take full advantage of the display capabilities of the VR devices that will be available by that time.”

Combining efforts from hardware manufacturers designing cutting-edge headsets and software solution firms developing codecs, applications, and experiences will allow immersive firms, as well as enthusiasts, to benefit the most from such innovative tools.

Similar companies have launched solutions to tackle ongoing challenges with pro-AV, broadcast, and XR technologies. XR Today recently spoke with AMD-Xilinx at Integrated Systems Europe (ISE) 2022 in Barcelona.

At the event, the company explained how its tech innovations greatly improved pro-AV, broadcasting, and metaverse technologies to boost infrastructure for future solutions.

The firm’s Zynq UltraScale+ solution is a dedicated system-on-a-chip (SoC) with video codec units for 4K, 60fps video and 10-bit H.264 and H.265 capabilities, keeping video latency to a minimum.